Tech Giants Announce Major AI Ethics Initiative

A coalition of major US technology firms has committed $1 billion to a new, independent foundation dedicated to developing responsible AI practices.

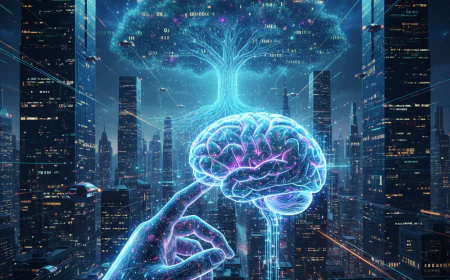

In a move aimed at shaping the future of artificial intelligence (AI), a coalition of global leaders, technologists, and ethicists has launched a comprehensive initiative to set universal standards for the ethical development of AI technologies. The initiative focuses on three core principles: transparency, bias mitigation, and human oversight. These elements are increasingly essential as AI systems become embedded in everyday life.

The initiative, backed by a consortium of governments, tech companies, and academic institutions, aims to address the ethical dilemmas raised by rapid advances in AI. Its founders believe that a framework of ethical guidelines can help mitigate the risks of AI deployment while promoting responsible innovation.

Transparency as a Cornerstone

One of the initiative's primary tenets is transparency. Because AI systems often function as "black boxes," understanding how they operate and reach decisions is imperative. The initiative calls on AI developers to disclose their algorithms, data sources, and decision-making processes. Such disclosure is meant not only to foster trust among users but also to let stakeholders scrutinize AI systems for fairness and accountability.

“Transparency is fundamental to ensuring that AI serves humanity and does not operate in obscurity,” said Dr. Emily Chen, a lead researcher in AI ethics at the initiative's headquarters. “By demystifying how AI systems work, we empower users and developers alike to engage in informed discussions about their implications.”

Technical Evolution and Creative Innovation

This development highlights the transformative potential of modern digital tools to reshape audience engagement. Industry leaders argue that integrating advanced predictive analytics with human-centric design is no longer a luxury but a necessity for staying competitive. The shift also raises important ethical questions about data privacy and the role of automated decision-making in creative processes. As technology continues to bridge the gap between imagination and reality, standards for quality and authenticity are expected to evolve significantly by 2026.

Article written by: Sophia Chen
